
Best way to use Stockfish chess

8/12/2023

When faced with evidence that LLMs significantly underperform other types of AI, proponents argue that LLMs “will get better.” According to Lodge, however, “If we’re to go along with this argument we need to understand why they will get better at these kinds of tasks.” This is where things get difficult, he continues, because no one can predict what GPT-4 will produce for a specific prompt. That unpredictability is why, he argues, “‘prompt engineering’ is not a thing.” It is also a struggle for AI researchers to prove that “emergent properties” of LLMs exist, much less predict them, he stresses.

Arguably, the best argument for that optimism is induction: GPT-4 is better than GPT-3 at some language tasks because it is larger, so, the reasoning goes, even larger models will be better still. “The only problem is that GPT-4 continues to struggle with the same tasks that OpenAI noted were challenging for GPT-3,” Lodge argues. Math is one of those: GPT-4 is better than GPT-3 at addition but still struggles with multiplication and other mathematical operations.

Making language models bigger doesn’t magically solve these hard problems, and even OpenAI says that larger models are not the answer. The reason comes down to the fundamental nature of LLMs, as noted in an OpenAI forum: “Large language models are probabilistic in nature and operate by generating likely outputs based on patterns they have observed in the training data. In the case of mathematical and physical problems, there may be only one correct answer, and the likelihood of generating that answer may be very low.”

By contrast, AI driven by reinforcement learning is much better at producing accurate results because it is a goal-seeking process. LLMs, notes Lodge, “are not designed to iterate or goal-seek. Reinforcement learning deliberately iterates toward the desired goal and aims to produce the best answer it can find, closest to the goal.” AlphaGo is not infallible, but at go it performs better than the best LLMs today.
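The gap between one-shot probabilistic output and goal-seeking iteration can be made concrete with a toy sketch. All names and numbers here are illustrative assumptions. The “model” is a hypothetical stand-in that emits one plausible-looking number with no check, so a single shot is right only about 1 time in 201. The goal-seeking side is plain greedy hill climbing driven by a checker’s score. It stands in for the iterate-toward-a-goal idea and is not reinforcement learning itself. The puzzle, finding x with x³ = 61,629,875, was picked because checking an answer is easy even when guessing it is not.

```python
import random

N = 61_629_875        # hypothetical puzzle: find the integer x with x**3 == N
LOW, HIGH = 300, 500  # assumed range of "plausible-looking" answers


def error(x: int) -> int:
    """Checker feedback: distance from the goal (0 means solved)."""
    return abs(x ** 3 - N)


def sample_answer(rng: random.Random) -> int:
    """Stand-in for a probabilistic model: one plausible guess, never checked."""
    return rng.randint(LOW, HIGH)


def one_shot_hit_rate(trials: int, seed: int = 0) -> float:
    """Fraction of single guesses that happen to be exactly right."""
    rng = random.Random(seed)
    hits = sum(error(sample_answer(rng)) == 0 for _ in range(trials))
    return hits / trials


def goal_seek(start: int) -> int:
    """Greedy hill climbing: propose nearby answers, keep the one the checker
    scores best, and stop when no proposal improves on the current answer."""
    best = start
    while True:
        candidate = min((best + d for d in (-100, -10, -1, 1, 10, 100)), key=error)
        if error(candidate) >= error(best):
            return best
        best = candidate


guess = sample_answer(random.Random(42))
print("one-shot guess:", guess, "correct:", error(guess) == 0)
print("one-shot hit rate:", one_shot_hit_rate(10_000))  # about 1/201 in theory
print("goal-seeking answer:", goal_seek(guess))         # 395
```

The useful part is the feedback. The hill climber only works because `error()` can score every proposal, and a one-shot sampler never consults such a score.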
Google’s AlphaGo, the best-known go-playing AI, is driven by reinforcement learning. Reinforcement learning works by (smartly) generating different solutions to a problem, trying them out, using the results to improve the next suggestion, and repeating that process thousands of times to find the best result. In the case of AlphaGo, the AI tries different moves and predicts both whether each is a good move and whether it is likely to win the game from the resulting position. It uses that feedback to “follow” promising move sequences and to generate other possible moves. The effect is a search of possible moves, a process called probabilistic search (in AlphaGo’s case, Monte Carlo tree search). You can’t try every move (there are far too many), but you can spend your time searching the areas of the move space where the best moves are likely to be found. The approach is incredibly effective for game-playing: AlphaGo has beaten top professional go players.
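The search idea, following promising move sequences while still sampling others, can be sketched with plain Monte Carlo tree search (UCT) on a toy take-away game. This is a swapped-in simplification. AlphaGo’s search is guided by policy and value networks trained with supervised and reinforcement learning. This sketch replaces those networks with random playouts, so it shows only the “spend time where good moves are likely” part. The game, the exploration constant `c=1.4`, and every name below are illustrative assumptions.

```python
import math
import random

MAX_TAKE = 3  # toy game (an assumption): remove 1-3 stones; taking the last stone wins


def moves(stones):
    """Legal moves: take 1..MAX_TAKE stones, but never more than remain."""
    return list(range(1, min(MAX_TAKE, stones) + 1))


class Node:
    def __init__(self, stones, parent=None, move=None):
        self.stones = stones          # stones left for the player to move here
        self.parent = parent
        self.move = move              # move that led from parent to here
        self.children = []
        self.untried = moves(stones)  # moves not yet added to the tree
        self.visits = 0
        self.wins = 0.0               # wins for the player who made `move`

    def uct_child(self, c=1.4):
        """Pick a child by UCT: high win rate, plus a bonus for rarely tried moves."""
        return max(
            self.children,
            key=lambda ch: ch.wins / ch.visits
            + c * math.sqrt(math.log(self.visits) / ch.visits),
        )


def random_playout(stones, rng):
    """Play random moves to the end (stones must be > 0).
    Returns True if the player to move at the start takes the last stone."""
    starter_to_move = True
    while True:
        stones -= rng.choice(moves(stones))
        if stones == 0:
            return starter_to_move
        starter_to_move = not starter_to_move


def mcts_best_move(stones, iterations=10_000, seed=0):
    """Monte Carlo tree search from a position with stones > 0."""
    rng = random.Random(seed)
    root = Node(stones)
    for _ in range(iterations):
        node = root
        # 1. Selection: follow promising moves through fully expanded nodes.
        while not node.untried and node.children:
            node = node.uct_child()
        # 2. Expansion: add one move that has not been tried yet.
        if node.untried:
            move = node.untried.pop(rng.randrange(len(node.untried)))
            child = Node(node.stones - move, parent=node, move=move)
            node.children.append(child)
            node = child
        # 3. Simulation: result is 1.0 if the player who moved into `node` wins.
        if node.stones == 0:
            result = 1.0  # that player just took the last stone
        else:
            result = 0.0 if random_playout(node.stones, rng) else 1.0
        # 4. Backpropagation: credit flips between the two players at each level.
        while node is not None:
            node.visits += 1
            node.wins += result
            result = 1.0 - result
            node = node.parent
    # The move the search explored most is the one it trusts most.
    return max(root.children, key=lambda ch: ch.visits).move


print("from 10 stones, take", mcts_best_move(10))
```

Under perfect play, the winning move from 10 stones is to take 2, which leaves a multiple of 4. With enough iterations, the visit counts should concentrate on that move. Returning the most-visited move rather than the one with the highest raw win rate is a common, robust convention in MCTS.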

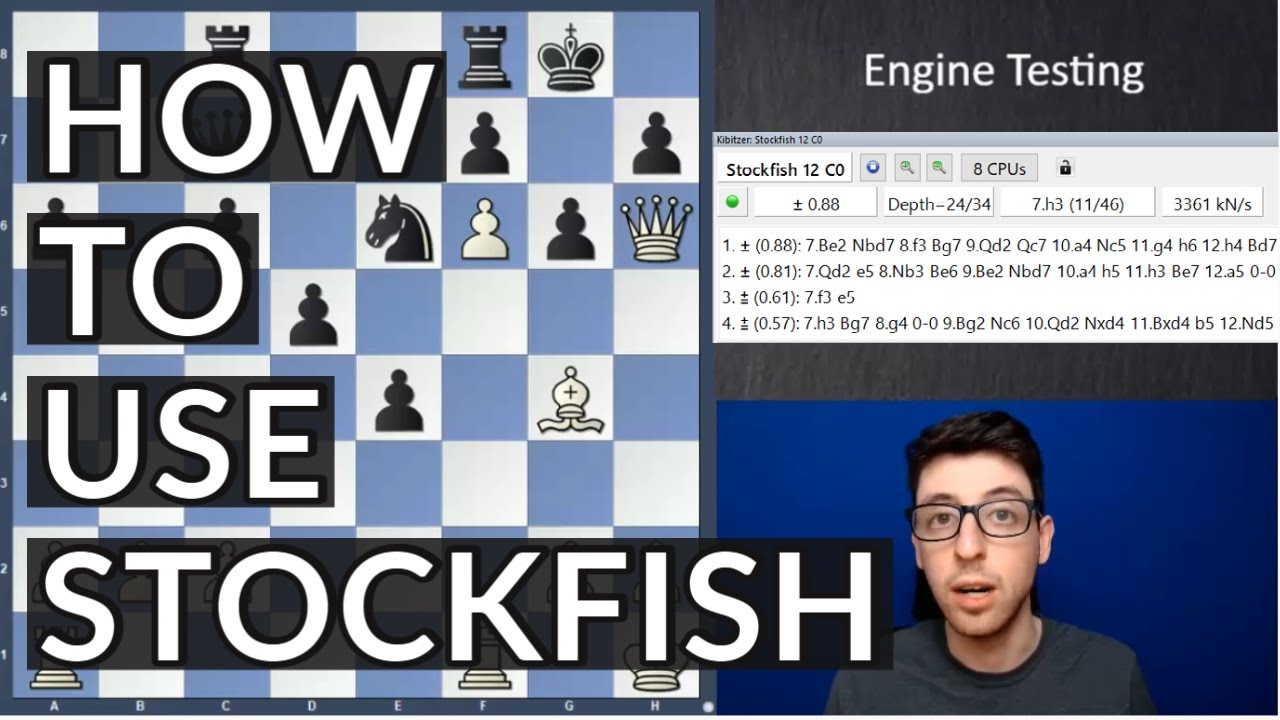

Levy Rozman, an International Master at chess, posted a video in which he plays against ChatGPT. The model makes a series of absurd and illegal moves, including capturing its own pieces. The best open source chess software (Stockfish, a search-based engine whose only neural network is a small NNUE evaluator, nothing like an LLM) had ChatGPT resigning in fewer than 10 moves after the LLM could not find a legal move to play. It’s an excellent demonstration that LLMs fall far short of the hype about general AI, and this isn’t an isolated example.
